I completed my PhD in computer science at the Mobile Robotics Lab at McGill University, supervised by Gregory Dudek and David Meger. My dissertation centers on 3D semantic scene understanding, with a specific focus on camera pose estimation under large viewpoint changes. I now work at the Samsung AI Center in Montreal as a research scientist. In my spare time, I work on my aquariums, play the piano, and compose music. My orchestral work (Voices of the Sea) was performed by the Vancouver Symphony Orchestra as part of the Jean Coulthard Readings.

Research Projects

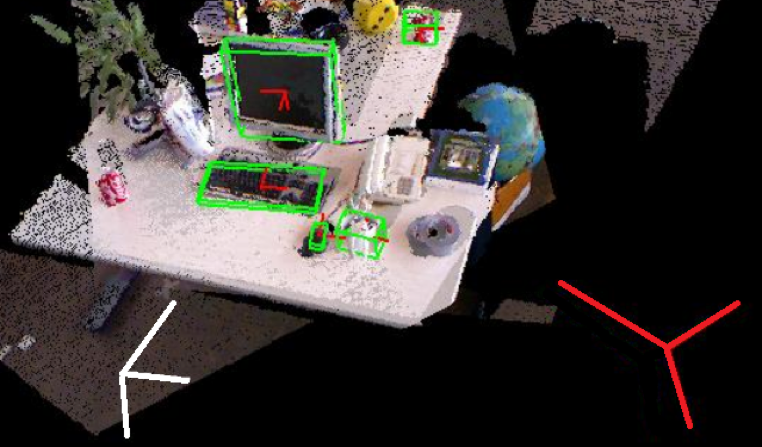

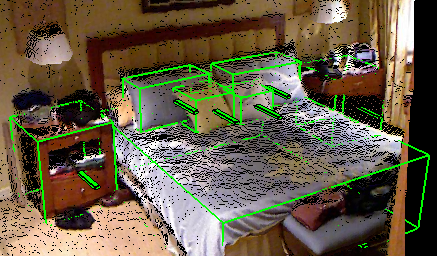

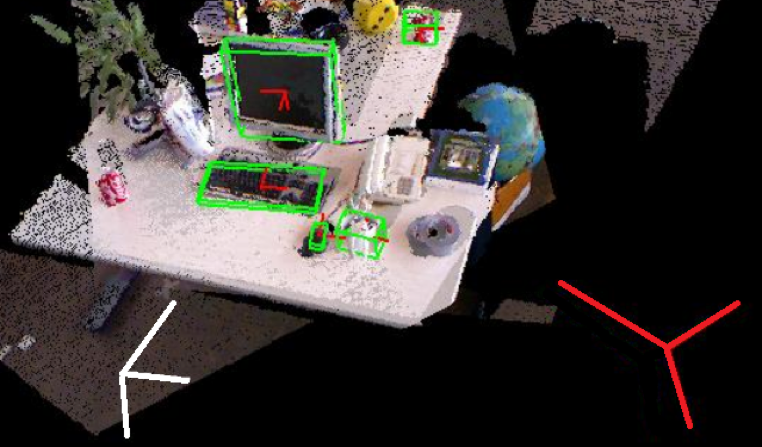

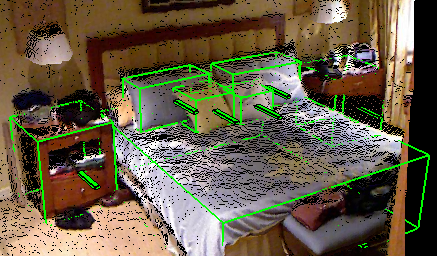

Pose Estimation for Disparate Views

Given RGB images from two far-apart views, I estimate the scale and pose of co-visible objects, while simultaneously estimating the 6-DoF camera transformation. Related publications: [ICRA'20] [ICRA'19a] [CRV'18] [IROS'17a]

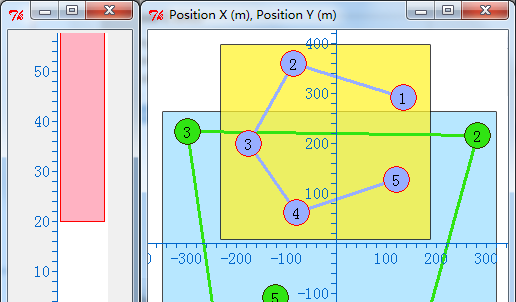

Unsupervised Learning of Semantic Spatial Relationships

Given a set of scenes containing unlabeled object bounding cuboids, our system learns a set of semantically meaningful spatial concepts that match closely with notions like left, right, on, or facing. Related publication: [ICRA'16]

The Aqua Project

I work with Aqua, a family of amphibious hexapod robots. I have contributed to its vision system and human-robot interaction layer, and have led numerous dives in which we deployed the robot along the coast of Barbados. The picture above shows me diving with Aqua. See project page. Related publications: [JFR'17] [ICRA'19b] [OCEANS'18] [IROS'17b] [FSR'16]

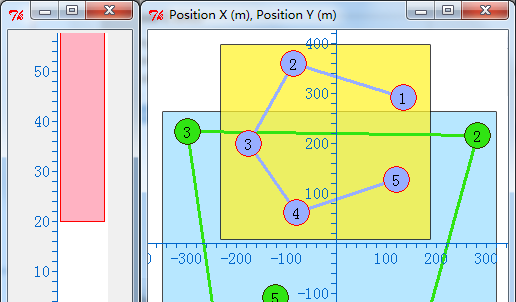

Graphical State Space Programming (GSSP)

GSSP is a framework for robot task specification that lets the user graphically specify constraints on the robot's state space and attach procedural code blocks that execute when those constraints are satisfied. This approach simplifies the specification of spatial constraints while maintaining the expressivity of textual code. See project page and video. Related publication: [ICRA'11]
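
The idea of attaching code to state-space constraints can be illustrated with a minimal Python sketch. This is not the GSSP implementation or its API: the names `BoxConstraint`, `Program`, `when`, and `step` are hypothetical, and an axis-aligned box over named state variables stands in for a region the user would draw graphically.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A robot state is modeled here as named scalar variables (an assumption).
State = Dict[str, float]


@dataclass
class BoxConstraint:
    """Hypothetical stand-in for a drawn region: (low, high) bounds per variable."""
    bounds: Dict[str, Tuple[float, float]]

    def satisfied(self, state: State) -> bool:
        # The constraint holds only if every bounded variable lies in its range.
        return all(lo <= state[name] <= hi for name, (lo, hi) in self.bounds.items())


@dataclass
class Program:
    """Pairs each constraint with a code block to run when it is satisfied."""
    rules: List[Tuple[BoxConstraint, Callable[[State], None]]] = field(default_factory=list)

    def when(self, constraint: BoxConstraint, action: Callable[[State], None]) -> None:
        self.rules.append((constraint, action))

    def step(self, state: State) -> int:
        """Run every action whose constraint holds; return how many fired."""
        fired = 0
        for constraint, action in self.rules:
            if constraint.satisfied(state):
                action(state)
                fired += 1
        return fired


log: List[str] = []
program = Program()
program.when(BoxConstraint({"x": (0.0, 5.0), "y": (0.0, 5.0)}),
             lambda s: log.append("take_photo"))
program.when(BoxConstraint({"depth": (10.0, 20.0)}),
             lambda s: log.append("surface"))

program.step({"x": 1.0, "y": 2.0, "depth": 3.0})   # inside the x/y box only
program.step({"x": 9.0, "y": 2.0, "depth": 12.0})  # inside the depth range only
```

In this sketch the spatial part of the task lives entirely in the constraint objects, while the actions remain ordinary code, which mirrors the separation described above.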

Publications

Journals

- [JFR'17] T. Manderson, Jimmy Li, N. Dudek, D. Meger, and G. Dudek (2017), Robotic Coral Reef Health Assessment Using Automated Image Analysis. Journal of Field Robotics, 34: 170–187. doi:10.1002/rob.21698

[View]

[Bibtex]

[Data]

Refereed Conference Papers

- [ICRA'20] Jimmy Li, K. Koreitem, D. Meger, and G. Dudek (2020). View-Invariant Loop Closure with Oriented Semantic Landmarks. In Proceedings of the International Conference on Robotics and Automation (ICRA). Paris, France. May 2020.

[PDF]

[Video]

[Bibtex]

- [ICRA'19a] Jimmy Li, D. Meger, and G. Dudek (2019). Semantic Mapping for View-Invariant Relocalization. In Proceedings of the International Conference on Robotics and Automation (ICRA). Montreal, Canada. May 2019.

[PDF]

[Bibtex]

- [ICRA'19b] Karim Koreitem, Jimmy Li, Ian Karp, Travis Manderson, and Gregory Dudek (2019). Underwater Communication Using Full-Body Gestures and Optimal Variable-Length Prefix Codes. In Proceedings of the International Conference on Robotics and Automation (ICRA). Montreal, Canada. May 2019.

[PDF]

[Bibtex]

- [OCEANS'18] Karim Koreitem, Jimmy Li, Ian Karp, Travis Manderson, Florian Shkurti, and Gregory Dudek (2018). Synthetically Trained 3D Visual Tracker of Underwater Vehicles. MTS/IEEE OCEANS. Charleston, SC, USA. October 2018.

[PDF]

[Bibtex]

- [CRV'18] Jimmy Li, Z. Xu, D. Meger, and G. Dudek (2018). Semantic scene models for visual localization under large viewpoint changes. Conference on Computer and Robot Vision (CRV). Toronto, Canada. May 2018.

[PDF]

[Bibtex]

- [IROS'17a] Jimmy Li, David Meger, and Gregory Dudek (2017). Context-coherent scenes of objects for camera pose estimation. In Proceedings of the International Conference on Intelligent Robots and Systems (IROS). Vancouver, Canada. September 2017.

[PDF]

[Bibtex]

- [IROS'17b] F. Shkurti, W. Chang, P. Henderson, M. Islam, J. Higuera, Jimmy Li, T. Manderson, A. Xu, G. Dudek, and J. Sattar (2017). Underwater Multi-Robot Convoying using Visual Tracking by Detection. In Proceedings of the International Conference on Intelligent Robots and Systems (IROS). Vancouver, Canada. September 2017.

[PDF]

[Bibtex]

[Project]

- [ICRA'16] Jimmy Li, David Meger, and Gregory Dudek (2016). Learning to Generalize 3D Spatial Relationships. In Proceedings of the International Conference on Robotics and Automation (ICRA). Stockholm, Sweden. May 2016.

[PDF]

[Bibtex]

- [FSR'16] T. Manderson, D. Meger, Jimmy Li, D. Cortés Poza, N. Dudek, and G. Dudek (2015). Towards Autonomous Robotic Coral Reef Health Assessment. Proceedings of Field and Service Robotics (FSR). Toronto, Canada. June 2015.

[PDF]

[Bibtex]

[Data]

- [IROS'12] F. Shkurti, A. Xu, M. Meghjani, J. Higuera, Y. Girdhar, P. Giguere, B. Dey, Jimmy Li, A. Kalmbach, C. Prahacs, K. Turgeon, I. Rekleitis, and G. Dudek (2012). Multi-Domain Monitoring of Marine Environments Using a Heterogeneous Robot Team. In Proceedings of the International Conference on Intelligent Robots and Systems (IROS), Algarve, Portugal. October 2012.

[PDF]

[Bibtex]

- [ICRA'11] Jimmy Li, Anqi Xu, and Gregory Dudek (2011). Graphical State Space Programming: A Visual Programming Paradigm for Robot Task Specification. In Proceedings of the International Conference on Robotics and Automation (ICRA), pp. 4846–4853. Shanghai, China. May 2011.

[PDF]

[Bibtex]

[Video]

[Project]