|

CURRENT PROJECTS

|

|

BLIND SUPER-RESOLUTION USING A LEARNING-BASED APPROACH

Super-resolution is the problem of obtaining a high-resolution image

from one or more low-resolution images. In most super-resolution

approaches, the camera degradation model must be known in advance. In

this project, the goal is to develop a blind super-resolution

algorithm using a supervised learning framework. (Researchers:

Isabelle Bégin and Frank P. Ferrie).

|

|

SPATIOTEMPORAL INDICATORS, MOTION SCALE SPACE,

AND PSYCHOPHYSICAL CORRELATES FOR CONTENT BASED VIDEO INDEXING AND RETRIEVAL

When searching through video archives for particular scenes of

interest, the amount of data is too large for a human operator

to parse usefully. The general field of content-based video

indexing aims to make this task manageable by automatically

categorizing and indexing video content. An algorithm to detect and

categorize scenes using psychophysically correlated motion metrics

would enhance the state of the art for this field. (Researchers:

Prasun Lala and Frank P. Ferrie).

|

|

|

PHOTO HULL REGULARIZED STEREO

Generated by a space carving algorithm, the photo hull can at best

serve as a rough model of the scene. We investigate a

regularization-based stereo method that uses the photo hull as a

regularizer. A refined depth map is expected because the photo hull

helps the stereo algorithm both by reducing its search space and by

addressing the occlusion problem inherent in stereo. (Researchers: Shufei Fan and Frank P. Ferrie).

|

|

|

SENSOR NETWORKS

Consider the following scenario. A set of visual sensors

(cameras), about whose operating parameters little is known, is placed

in an open, complex environment. We would like the cameras to

collaborate autonomously, i.e., to communicate and exchange information, in order

to derive a finely detailed 3D representation (a.k.a. visual model) of

that environment. (Researchers: Karim Abou-Moustafa and Frank P. Ferrie).

|

|

|

INVESTIGATING THE USE OF PROGRAMMABLE GRAPHICS CARDS TO ACCELERATE COMPUTER VISION ALGORITHMS

More details to come.

(Researchers: Prakash Patel and Frank P. Ferrie).

|

|

|

VIRTUAL REALITY, OPTIMIZATION OF 3D SCENE DATA

New project! More details to come. (Researchers: John Harrison and Frank P. Ferrie).

|

|

GEOIDE

Intelligent data fusion for aircraft navigation and disaster

management. (Researchers: Isabelle Bégin, Prasun Lala and Frank P. Ferrie).

|

|

CORIMEDIA

CoRIMedia is an academic research consortium on digital imaging,

video, audio and multimedia processing.

|

|

|

COMBINING DATA FROM DISTRIBUTED RANGE SENSORS (DRMS)

[More details]

|

|

PAST PROJECTS

|

|

ENHANCED AND SYNTHETIC VISION SYSTEM

The Enhanced and Synthetic Vision System (ESVS) is a Canadian

Forces Search And Rescue (CF SAR) Technology Demonstrator

project to help SAR helicopter crews

see in poor visibility conditions.

[More details]

|

|

MONOCULAR CAMERA CALIBRATION

The accurate calibration of monocular cameras is crucial for many

precision vision systems. Accordingly, many techniques have been

introduced to solve this problem, each having its own set of

limitations/requirements. The main intent of our work was to

provide practical guidelines for the implementation and assessment

of 14 state-of-the-art camera calibration methods found in the

technical literature.

|

|

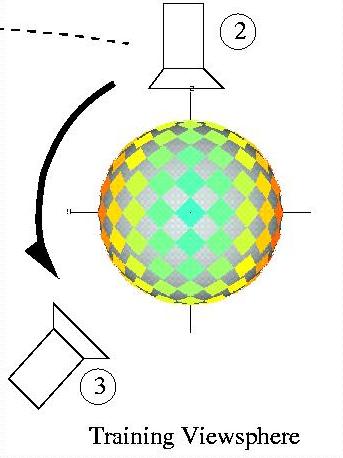

ENTROPY-BASED GAZE PLANNING

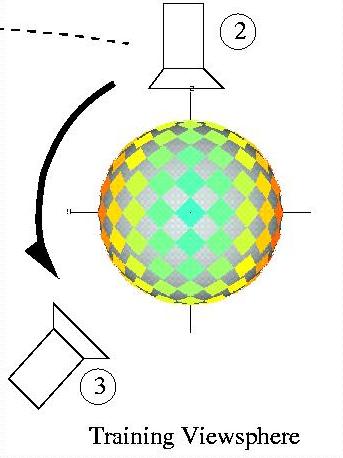

In this work, we introduce the notion of entropy maps,

and show how they can be used to guide an active

observer along an optimal trajectory, by which the

identity and pose of objects in the world can be

inferred with confidence, while minimizing the amount

of data that must be gathered.

[More details]
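As a rough illustration of the idea, and not the method used in this work, the sketch below assumes that a belief distribution over candidate object identities can be predicted for each viewpoint. It computes the Shannon entropy of each belief and selects the viewpoint with the lowest entropy, i.e. the least ambiguous one. The viewpoint names, the belief values, and the helpers `shannon_entropy` and `next_viewpoint` are all hypothetical.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy (in bits) of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0.0)


def next_viewpoint(entropy_map):
    """Return the viewpoint whose predicted belief has the lowest entropy."""
    return min(entropy_map, key=entropy_map.get)


# Hypothetical beliefs over three candidate objects, one belief per viewpoint.
beliefs = {
    "front": [0.34, 0.33, 0.33],  # nearly uniform: an ambiguous view
    "side": [0.70, 0.20, 0.10],
    "top": [0.97, 0.02, 0.01],    # sharply peaked: a discriminative view
}

# The "entropy map" here is simply entropy indexed by viewpoint.
entropy_map = {view: shannon_entropy(p) for view, p in beliefs.items()}
best = next_viewpoint(entropy_map)
```

In a real active-vision loop the observer would move to `best`, update its beliefs with the new measurement, and recompute the map; that loop is omitted here.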

|

|

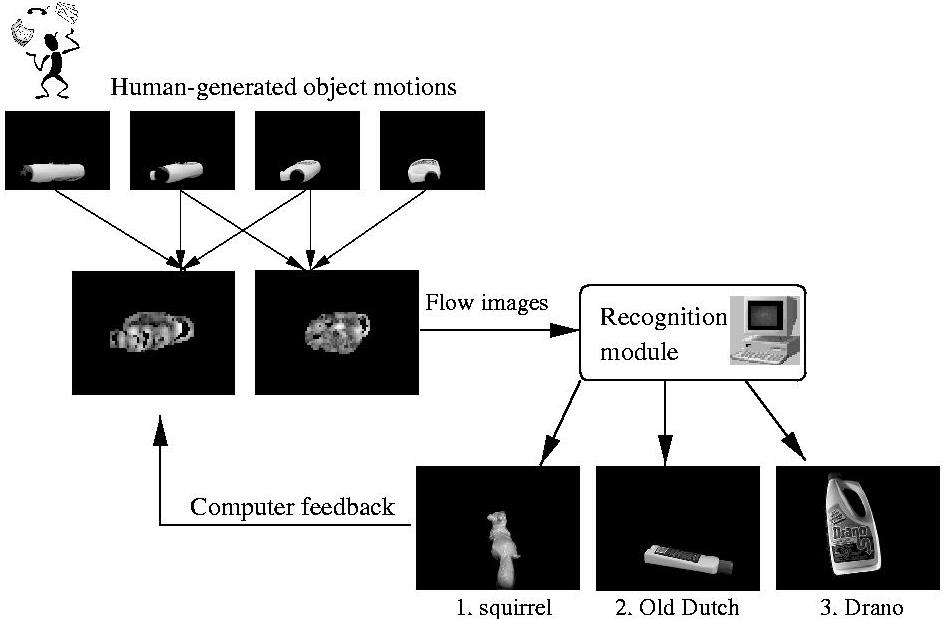

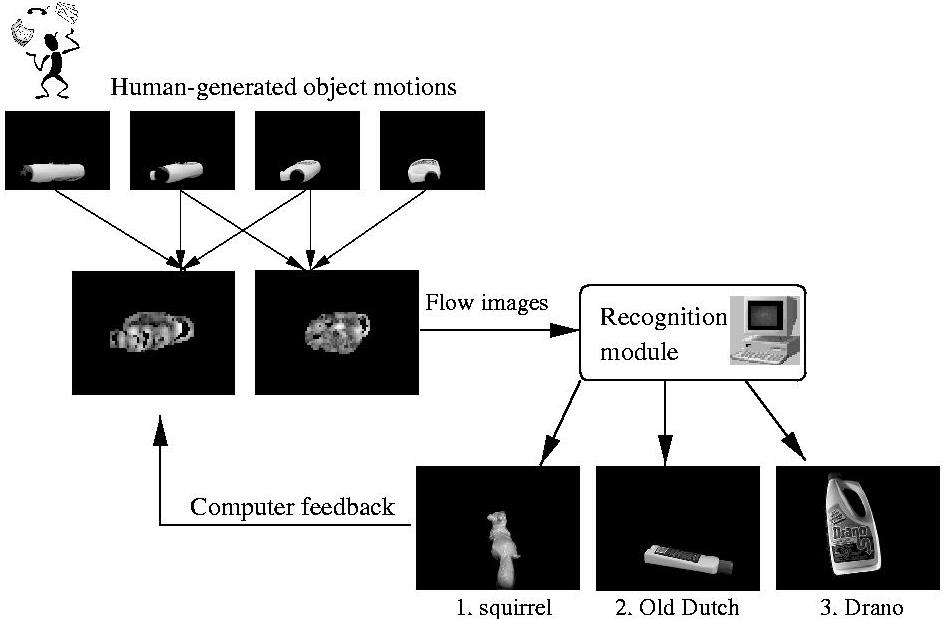

INTERACTIVE VISUAL DIALOG

We have introduced a paradigm called the Interactive

Visual Dialog (IVD) as a means of facilitating a

system's ability to recognize objects presented to it

by a human.

[More details]

|

|

RECOGNIZING OBJECTS FROM CURVILINEAR MOTION

The premise of this work is twofold: i) that an

object can be recognized on the basis of the optical

flow it induces on a stationary observer, and ii) that

a basis for recognition can be built on the appearance

of flow corresponding to local curvilinear motion.

[More details]

|

|

RANGE DATA

The APL was one of the

first laboratories to own a laser range finder, a

non-contact device that measures the shape of a surface in 3D.

In collaboration with the NRC (National Research

Council), a sophisticated

synchronized laser scanner with a precision of up to

0.1 mm was developed. Over the

years, the technology has become more accessible, and

the APL has developed its own

custom-designed, low-cost range

finder.

[More details]

|

|

MODEL BASED RECOGNITION

Given that the geometric models can be

extracted automatically from range data, it becomes

possible to recognize the parts of

an object. As shown in the right image, the problem

consists of matching a part of a scanned object with

a set of parts already stored in a database. The

belief level of this match is returned and can be

used to perform formal object identification.

|

|

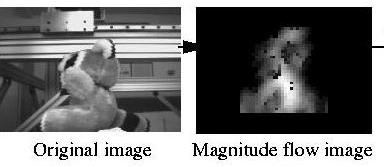

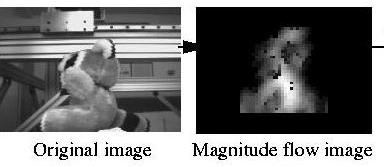

MONOCULAR VIDEO FLOW

More recently, the Artificial Perception

Laboratory has become interested in the computation

of video flow resulting from the

motion of an object in front of a video camera. This

ongoing research has moved

from traditional pixel-to-pixel image comparison to

more sophisticated frequency-space

analysis methods.

[More details]

|

|

IMAGE INDEXING

The quick and accurate indexing of image

databases has become an interesting research topic as access to large

databases becomes easier. Current indexing

techniques commonly use the appearance

of objects to match them against a database. These

techniques can easily be fooled by

changes in illumination. We study the limits of these fast indexing techniques

and how they can be improved.

|

|

FUSION OF SYNTHETIC AND INFRARED IMAGERY

This research presents the concept of an

Enhanced and Synthetic Vision System (ESVS)

that could help aircraft pilots see in low

visibility conditions. The basic idea is that

synthetic and infrared imagery would be fused

to maximize image content.

|

|

RESEARCH CONTRACTS

|

|

REVERSE ENGINEERING / OBJECT INSPECTION

In many situations, a CAD model of an existing

object is required for manufacturing or in order

to perform design modifications to the object. In

this example, a prototype of a virtual reality

helmet was digitized for CAE Electronics Ltd.

The top image shows a grid representation of

the final model. The bottom image shows the same

surface rendered with artificial shading.

|

|

SURFACE INTERPOLATION

A range image is composed of a large number of discrete 3D

points. In general, these are sufficient to represent the object

completely. However, the surface between the points is undefined and has

to be interpolated in order to reproduce it with most prototyping

techniques. The NURBS (Non-Uniform Rational B-Spline) representation

is commonly used to perform this interpolation. It has the double

advantage of interpolating the data and reducing the data

substantially. The top figure shows a raw range image of an Indian

mask. The bottom figure shows the same information with a coarse NURBS

grid. This work was done in collaboration with Hymarc Ltd.
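To make the NURBS representation more concrete, here is a minimal sketch that evaluates points on a NURBS curve using the Cox-de Boor recursion. It is a curve rather than a surface, and it is not the software used in this project. The control points, weights, and knot vector are illustrative assumptions: they describe the standard quadratic NURBS encoding of a quarter of the unit circle. The function names `bspline_basis` and `nurbs_point` are hypothetical.

```python
import math


def bspline_basis(i, degree, u, knots):
    """Cox-de Boor recursion for the i-th B-spline basis function."""
    if degree == 0:
        return 1.0 if knots[i] <= u < knots[i + 1] else 0.0
    left = right = 0.0
    d1 = knots[i + degree] - knots[i]
    if d1 > 0.0:  # skip terms with a zero-length knot span
        left = (u - knots[i]) / d1 * bspline_basis(i, degree - 1, u, knots)
    d2 = knots[i + degree + 1] - knots[i + 1]
    if d2 > 0.0:
        right = (knots[i + degree + 1] - u) / d2 * bspline_basis(i + 1, degree - 1, u, knots)
    return left + right


def nurbs_point(u, control_points, weights, knots, degree):
    """Evaluate a NURBS curve at u (valid for knots[0] <= u < knots[-1])."""
    numerator = [0.0] * len(control_points[0])
    denominator = 0.0
    for i, (point, weight) in enumerate(zip(control_points, weights)):
        b = bspline_basis(i, degree, u, knots) * weight
        denominator += b
        for k, coord in enumerate(point):
            numerator[k] += b * coord
    return tuple(c / denominator for c in numerator)


# Assumed example data: quarter of the unit circle as a quadratic NURBS curve.
control_points = [(1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
weights = [1.0, math.sqrt(2.0) / 2.0, 1.0]
knots = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]

samples = [nurbs_point(u, control_points, weights, knots, 2)
           for u in (0.0, 0.25, 0.5, 0.75)]
```

Three weighted control points reproduce the arc exactly, which hints at why a coarse NURBS grid can stand in for a much denser set of range points. A surface uses the same basis functions in two parameter directions.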

|

|

COMPUTER-AIDED SHOE DESIGN

Many people with abnormal feet require custom-designed shoes. For the

most part, this involves a tedious process of precise measurements and

the intervention of an experienced shoemaker. In this feasibility

study, we attempted to automate the whole process. The most

important design parameters can be changed for the customer and

visualized immediately. The top figure shows the heel height selection

and the bottom figure shows the toebox shaping. The study was done in

collaboration with Telemars Recherche et Developpement Inc.

|

|

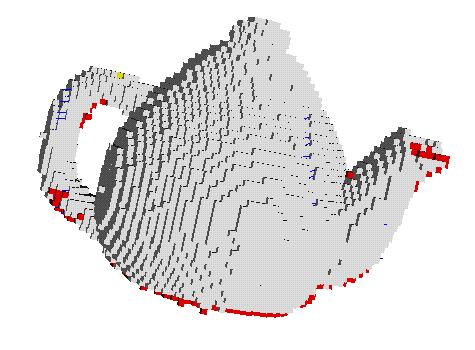

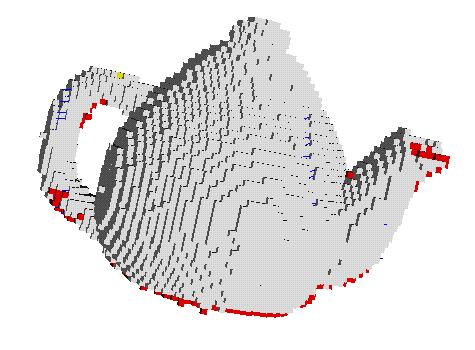

OBJECT DIGITIZATION

With current non-contact scanner technology, the dense digitization of

complex objects can be done relatively quickly and to a high level of

precision (25 mm). The process is generally left entirely to the

expert hands of an operator, and the assessment of the overall quality

of the surface coverage is mostly subjective. The design

developed at the APL indicates that any holes on the surface of an

object (red cubes in the top figure) can be detected reliably and can

provide a completion criterion for the digitization process. The bottom

figure shows the scanner path followed to perform full surface

coverage. This work was done for Hymarc Ltd.

|

|

LOCALIZATION OF HIDDEN OBJECT PARTS

In hostile environments, such as in space or in nuclear plants, human

intervention must be kept as low as possible. Computer vision and robot

teleoperation must be tightly coordinated in order to perform

complex tasks efficiently. The number of vision sensors is limited and

some objects will inevitably be hidden or occluded. Any action on

these objects requires that their location be computed precisely from

their relative position to the visible objects. The left figure shows

a test object where three components were used to deduce the location

of two targets in the right image. This work was done for

Hymarc Ltd.
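The geometric idea can be sketched as follows, under simplifying assumptions that are not taken from the original work: each visible component is reduced to a single 3D reference point, the object is rigid, and the three points are not collinear. A local coordinate frame is built from the three points in both the model and the scene. A hidden target known in the model frame is then mapped into the scene. All names and coordinates below are hypothetical.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(a, s):
    return tuple(x * s for x in a)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(a):
    return scale(a, 1.0 / math.sqrt(dot(a, a)))


def frame(p0, p1, p2):
    """Orthonormal frame (origin, x, y, z) from three non-collinear points."""
    x = normalize(sub(p1, p0))
    z = normalize(cross(x, sub(p2, p0)))
    y = cross(z, x)
    return p0, x, y, z


def locate_hidden(model_points, scene_points, hidden_in_model):
    """Map a hidden model point into the scene via the three visible points."""
    origin_m, xm, ym, zm = frame(*model_points)
    origin_s, xs, ys, zs = frame(*scene_points)
    d = sub(hidden_in_model, origin_m)
    # Coordinates of the hidden point in the local frame are pose-invariant.
    cx, cy, cz = dot(d, xm), dot(d, ym), dot(d, zm)
    return add(origin_s, add(scale(xs, cx), add(scale(ys, cy), scale(zs, cz))))


# Hypothetical data: scene = model rotated 90 degrees about z, then shifted by (2, 3, 4).
model_points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
scene_points = [(2.0, 3.0, 4.0), (2.0, 4.0, 4.0), (1.0, 3.0, 4.0)]
hidden_target = locate_hidden(model_points, scene_points, (0.5, 0.5, 1.0))
```

With noisy measurements or more than three visible points, a least-squares rigid alignment would be a more robust choice than this exact three-point construction.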

|

|

BIOMEDICAL APPLICATION

The Artificial Perception Laboratory has collaborated many times with

hospitals and with other departments at McGill University

to acquire and process range data required for various statistical

analyses. The left image shows one such project, in which the curvature

of the bones of the knee was modelled and then compared to real data in order

to produce artificial implants. The right image shows a similar project in which

the surfaces of 229 vertebrae were scanned to evaluate the average dimensions

for various age groups.

|

|

CIVIL ENGINEERING COLLABORATION

In collaboration with the McGill Civil Engineering

Department, the surfaces of many large granite rocks

(3 feet in diameter) were scanned to measure the effect

of high-pressure water infiltration in rock

fractures. The surface of each rock was scanned right

after the rupture (top picture) and compared with a

scan of the same rock after putting the two parts

back together and applying a high-force constraint and

water pressure in the fracture. The bottom image

shows the color-coded difference between the

surfaces "before" and "after".

|